Wirestock Secures $23M Series A to Expand Multimodal Data Platform for AI Training

Wirestock has raised $23 million in Series A funding to scale its multimodal data platform that provides high-quality, ethically sourced datasets for training AI models. The platform connects creators with AI developers, offering diverse data types including images, videos, and text.

Wirestock, a platform that connects creative professionals with artificial intelligence developers, has closed a $23 million Series A funding round. The investment will be used to scale the company's multimodal data platform, which supplies high-quality, ethically sourced datasets for training AI models. The round was led by venture capital firms, with participation from existing investors, a sign of confidence in Wirestock's mission to democratize AI training data.

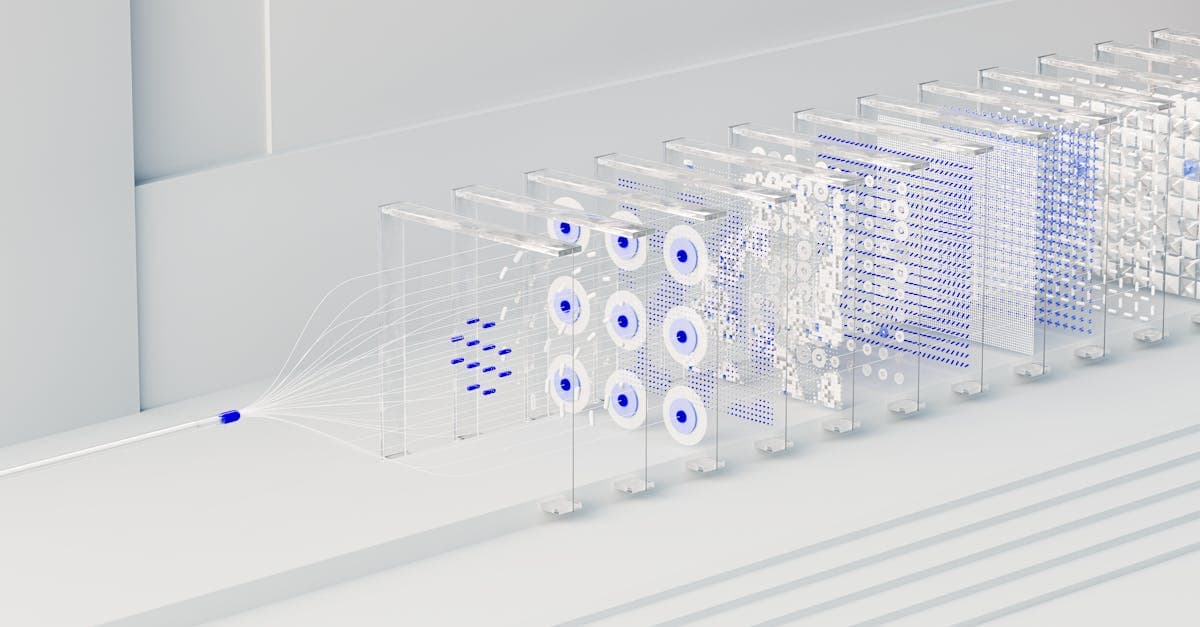

The Wirestock platform operates as a marketplace where creators—photographers, videographers, and writers—can upload their work and license it for AI training purposes. The datasets are curated to include a wide variety of modalities, such as images, videos, text, and audio, ensuring that AI models can learn from diverse and representative data. Wirestock employs rigorous quality control and ethical sourcing practices, including clear attribution and compensation for creators, addressing common concerns about data provenance in AI.

The company's technology includes automated tagging, metadata enrichment, and data filtering tools that help AI developers quickly find relevant datasets. The platform also offers custom dataset creation services, where clients can specify requirements for niche applications like medical imaging or autonomous driving. By streamlining the data acquisition process, Wirestock aims to reduce the time and cost associated with building robust AI training pipelines.
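To make the filtering idea concrete, here is a minimal sketch of how a developer might narrow a tagged catalog down to usable training assets. It is an illustration only: the `Asset` record, its fields, the `filter_assets` helper, and the 0.8 quality threshold are all hypothetical and are not drawn from Wirestock's actual schema or API.

```python
from dataclasses import dataclass, field


@dataclass
class Asset:
    """Hypothetical record for one licensed item (not Wirestock's real schema)."""
    asset_id: str
    modality: str                      # e.g. "image", "video", "text"
    tags: set[str] = field(default_factory=set)
    quality: float = 0.0               # assumed 0..1 score from a quality-control step
    licensed_for_training: bool = False


def filter_assets(assets, modality, required_tags, min_quality=0.8):
    """Keep assets of one modality that carry every required tag,
    meet the quality threshold, and are licensed for AI training."""
    required = set(required_tags)
    return [
        a for a in assets
        if a.modality == modality
        and required <= a.tags          # subset test: all required tags present
        and a.quality >= min_quality
        and a.licensed_for_training
    ]


# Made-up catalog entries, for illustration only.
catalog = [
    Asset("a1", "image", {"street", "night", "car"}, 0.92, True),
    Asset("a2", "image", {"street", "day"}, 0.95, True),
    Asset("a3", "video", {"street", "night", "car"}, 0.90, True),
    Asset("a4", "image", {"street", "night", "car"}, 0.60, True),
    Asset("a5", "image", {"street", "night", "car"}, 0.97, False),
]

selected = filter_assets(catalog, "image", {"night", "car"})
print([a.asset_id for a in selected])
```

In this toy catalog only `a1` passes every check; the others fail on tags, modality, quality, or licensing respectively. A production system would presumably run such filters against a search index rather than an in-memory list.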

The $23 million Series A comes at a time when the AI industry faces increasing scrutiny over data sourcing and copyright issues. Wirestock's approach provides a legal and ethical alternative to scraping data from the internet without consent. The platform has already partnered with several major AI research labs and startups, providing them with curated datasets that comply with evolving regulations.

For creators, Wirestock offers a new revenue stream by monetizing their work in the growing AI sector. Contributors retain ownership of their content and receive royalties each time their data is used in a training set. This model has attracted a large community of photographers and videographers, particularly those specializing in stock imagery, who see AI licensing as a natural extension of their existing business.

The funding will be used to expand Wirestock's engineering team, improve platform scalability, and develop new features like real-time data streaming and synthetic data generation. The company also plans to increase its global outreach, targeting creators in emerging markets where high-quality visual content is abundant but monetization opportunities are limited.

Wirestock's platform is currently available worldwide, with support for multiple languages and currencies. Pricing for AI developers varies based on dataset size and complexity, with options for one-time purchases or subscription-based access. The company has not disclosed specific pricing tiers but emphasizes that its model offers cost savings compared to traditional data collection methods.

Looking ahead, Wirestock aims to establish itself as a standard for ethical data sourcing in the AI industry. Future developments may include integration with popular AI frameworks and tools, as well as partnerships with cloud service providers. The company is also exploring ways to use blockchain technology for transparent royalty tracking and data lineage, though no timeline has been announced for these initiatives.

Self-Improving AI Startup Raises $650 Million to Build Recursive Superintelligence

Richard Socher has launched Recursive Superintelligence, a San Francisco startup with $650 million in funding, aiming to create AI systems that can improve themselves without human intervention. The company's ambitious goal is to develop recursive self-improving AI that could fundamentally reshape technology and industry.

Richard Socher, a prominent figure in artificial intelligence, has unveiled Recursive Superintelligence, a new San Francisco-based startup that has already secured $650 million in funding. The company's mission is to develop self-improving AI systems capable of enhancing their own capabilities without human input, a concept known as recursive self-improvement. This ambitious project aims to create AI that can autonomously learn and evolve, potentially leading to superintelligent systems that far surpass current AI capabilities.

The core technology behind Recursive Superintelligence involves building AI models that can modify their own architectures, training data, and learning algorithms. Unlike traditional AI, which requires human engineers to refine models, these systems would continuously optimize themselves, accelerating progress exponentially. The startup plans to use large-scale neural networks and reinforcement learning to create a feedback loop where the AI improves its performance with each iteration, ultimately achieving levels of intelligence beyond human comprehension.
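The company has not published its method, so the following is only a toy illustration of the general feedback-loop idea, not Recursive Superintelligence's approach. It shows a simple hill-climbing search that adjusts one of its own settings (the step size) based on whether its last attempt helped. The objective function, the 1.5 and 0.9 multipliers, and all names are assumptions chosen for the example.

```python
import random


def loss(x):
    """Toy objective standing in for 'model performance'; lower is better."""
    return (x - 3.0) ** 2


def self_tuning_search(steps=200, seed=0):
    """Hill climbing that adapts its own step size after each attempt."""
    rng = random.Random(seed)          # seeded so runs are repeatable
    x, step = 0.0, 1.0
    history = [loss(x)]
    for _ in range(steps):
        candidate = x + rng.uniform(-step, step)
        if loss(candidate) < loss(x):
            x = candidate
            step *= 1.5                # success: search more boldly next time
        else:
            step *= 0.9                # failure: search more cautiously next time
        history.append(loss(x))        # record the best loss so far
    return x, history


best_x, history = self_tuning_search()
print(f"start loss={history[0]:.3f}, final loss={history[-1]:.6f}")
```

Because a candidate is accepted only when it lowers the loss, the recorded loss never increases from one iteration to the next. The gap between this sketch and the article's claim is large: here the system tunes a single number, whereas the startup describes models that would rewrite their own architectures, data, and learning algorithms.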

Recursive self-improvement has long been a theoretical concept in AI research, often discussed in the context of the technological singularity. Socher's approach differs from other AI companies by focusing on creating a general-purpose recursive improvement framework rather than specialized applications. The startup aims to develop a foundational AI system that can be applied across industries, from scientific research to software development, potentially revolutionizing how complex problems are solved.

The $650 million round is unusually large for a newly launched AI startup, reflecting investor confidence in Socher's vision and track record. Socher previously founded the deep learning startup MetaMind, served as Chief Scientist at Salesforce after it acquired MetaMind in 2016, and went on to found the AI search company You.com. The funding will primarily be used to recruit top AI researchers and acquire the computing infrastructure needed to train recursive models.

If successful, Recursive Superintelligence could have profound implications for various sectors. In healthcare, self-improving AI might discover new drugs or optimize treatment plans faster than human researchers. In software engineering, it could write and debug code autonomously, accelerating development cycles. However, the startup also acknowledges the need for robust safety measures to ensure that recursive self-improvement remains aligned with human values and does not lead to unintended consequences.

The company is currently operating in stealth mode, with limited details about its specific technical approach or timeline. Socher has indicated that early prototypes are already showing promising results in controlled environments, but widespread deployment is likely years away. The startup plans to release initial research papers and open-source components to foster collaboration with the broader AI community.

Industry experts have expressed both excitement and caution about Recursive Superintelligence's goals. While the potential benefits are enormous, the risks of creating a self-improving AI without adequate safeguards are significant. The startup has established an ethics board and committed to transparency in its development process, though critics argue that the very nature of recursive improvement makes it difficult to predict or control outcomes.

Looking ahead, Recursive Superintelligence faces several challenges, including ensuring the safety of self-improving systems and managing public perception of superintelligent AI. The company plans to engage with regulators and policymakers to develop guidelines for recursive AI development. Socher has emphasized that the goal is not to create an uncontrollable intelligence but to build a tool that amplifies human capabilities responsibly. The coming years will reveal whether this ambitious project can deliver on its promise or if it will remain a theoretical pursuit.

Pope Leo XIV Warns AI Warfare Fuels 'Spiral of Annihilation'

During a visit to Europe's largest university, Pope Leo XIV condemned the rise of AI-directed warfare, warning it leads to a 'spiral of annihilation.' He called for peace in conflict zones like the Middle East and Ukraine.

ROME — Pope Leo XIV on Thursday delivered a stark warning against the increasing reliance on artificial intelligence and high-tech weaponry in modern warfare, stating that such developments are driving the world toward a 'spiral of annihilation.' Speaking at Europe’s largest university, the pontiff urged global leaders to prioritize peace over technological escalation in conflicts, specifically calling for an end to hostilities in the Middle East and Ukraine. His remarks come amid growing international debate over the ethical implications of autonomous weapons systems and AI-driven military strategies.

The Pope emphasized that the unchecked advancement of AI in military applications risks dehumanizing conflict, making it easier for nations to engage in warfare without fully considering the human cost. 'When machines make life-and-death decisions, we lose sight of the sacredness of every human life,' he said, adding that such technology could lead to rapid, uncontrollable escalation. He referenced recent conflicts where AI has been used for drone strikes, surveillance, and targeting, noting that these tools often operate with minimal human oversight.

Pope Leo XIV specifically highlighted the situations in Ukraine and the Middle East, where both state and non-state actors have employed AI-enhanced technologies. In Ukraine, both sides have used drones and AI for reconnaissance and precision strikes, while in the Middle East, autonomous systems have been deployed for border security and targeted operations. The Pope argued that these technologies, while potentially reducing immediate risks to soldiers, create new forms of instability and mistrust among nations.

The pontiff's address also touched on broader ethical concerns, comparing the current arms race in AI to the nuclear arms race of the 20th century. He warned that without international regulations, the development of AI weaponry could lead to a new era of conflict where wars are fought by algorithms rather than human judgment. 'We must not repeat the mistakes of the past, where technological superiority was pursued at the expense of human dignity,' he stated.

Pope Leo XIV called for a global treaty to ban autonomous weapons that operate without meaningful human control, echoing proposals from various non-governmental organizations. He urged scientists, engineers, and policymakers to consider the moral dimensions of their work, emphasizing that technology should serve peace, not war. 'Innovation must be guided by conscience, not just by what is possible,' he said.

Reactions to the Pope's speech were mixed, with some military experts arguing that AI can actually reduce civilian casualties by improving targeting accuracy. However, human rights groups and ethicists praised the pontiff's stance, noting that the Vatican has historically played a role in advocating for disarmament. The Pope's remarks are expected to influence Catholic leaders worldwide and could put pressure on governments to engage in more robust discussions about AI regulation.

As the global community grapples with the rapid pace of AI development, Pope Leo XIV's warning serves as a moral call to action. The Vatican has not yet proposed specific policy measures but has indicated it will continue to engage with international bodies on the issue. The Pope concluded his speech with a plea for dialogue and diplomacy, stating that 'peace is not a product of technology, but of human hearts and minds.'

Anthropic Forecasts AGI Arrival by 2028 Amid Intensifying US-China AI Competition

Anthropic has predicted that Artificial General Intelligence (AGI) could be achieved as early as 2028, highlighting the accelerating race between the United States and China. The forecast comes amid heightened geopolitical tensions and follows President Trump's recent visit to Beijing.

Anthropic, the artificial intelligence company behind the Claude chatbot, has released a new report forecasting that Artificial General Intelligence (AGI) could become a reality by 2028. The prediction arrives at a time when the global AI race between the United States and China is intensifying, with both nations investing heavily in advanced AI research and development. The report follows President Donald Trump's recent visit to Beijing, which included discussions on technology and innovation, underscoring the geopolitical significance of AI progress.

According to Anthropic's analysis, AGI, defined as an AI system capable of performing any intellectual task that a human can, may be achieved by 2028. The company cites rapid advancements in large language models, reinforcement learning, and computational power as key drivers. Anthropic's own models, such as Claude, have demonstrated increasing capabilities in reasoning, coding, and creative tasks, suggesting that the path to AGI is accelerating.

The report outlines several technical milestones needed for AGI, including improved generalization across domains, long-term memory, and autonomous goal-setting. Anthropic emphasizes that current AI systems still lack robust common sense and fail at tasks requiring deep understanding of physical reality. However, the company believes that scaling existing architectures and incorporating new training techniques could bridge these gaps by 2028.

This forecast places Anthropic alongside other AI labs like OpenAI and Google DeepMind, which have made similar predictions. OpenAI has suggested that AGI could arrive within the next decade, while DeepMind co-founder Shane Legg has estimated a 50% chance by 2028. The overlap in these timelines indicates growing confidence in the field. However, skeptics argue that AGI remains a distant goal due to fundamental challenges in reasoning and consciousness.

The US-China AI rivalry adds another layer of urgency. The US currently leads in foundational research and chip design, but China excels in data scale and government-backed deployment. Trump's Beijing visit included talks on AI safety standards and potential cooperation, though tensions remain high over export controls on advanced semiconductors. Anthropic's report notes that whichever nation achieves AGI first could gain significant economic and military advantages.

For consumers and businesses, the arrival of AGI could transform industries from healthcare to transportation. Anthropic envisions AGI systems that can conduct scientific research, manage complex logistics, and even provide personalized education. However, the company also warns of risks, including job displacement, misuse in autonomous weapons, and the challenge of aligning AGI with human values. Anthropic advocates for proactive regulation and transparency.

Currently, Anthropic's Claude is available in over 100 countries, but the company has not announced a timeline for AGI deployment. Pricing for Claude's advanced tiers ranges from $20 per month for individuals to custom enterprise plans. The report does not specify which regions might see AGI first, but likely early adopters include the US and China, where infrastructure and talent are concentrated.

Despite the bold prediction, many unknowns remain. Anthropic acknowledges that unforeseen technical hurdles could delay AGI beyond 2028, and that societal readiness is equally important. The company plans to release follow-up reports detailing safety protocols and benchmarks. The next few years will be critical in determining whether Anthropic's forecast holds true or if AGI remains a more distant ambition.

Trump Reveals AI Safety Talks and Nvidia Chip Discussions with Xi Jinping

US President Donald Trump disclosed that he and Chinese leader Xi Jinping discussed artificial intelligence guardrails and Nvidia's H200 chips during a two-day summit in Beijing, highlighting the growing importance of AI regulation and semiconductor technology in US-China relations.

US President Donald Trump has revealed that he engaged in discussions with Chinese President Xi Jinping regarding the establishment of guardrails for artificial intelligence, as well as Nvidia Corp.'s H200 chips, during a two-day summit held in Beijing. The talks underscore the increasing significance of AI governance and advanced semiconductor technology in the bilateral relationship between the two global powers. Trump's disclosure came during a press briefing where he emphasized the need for international cooperation on AI safety standards.

The discussions on AI guardrails focused on developing frameworks to ensure the safe and ethical deployment of artificial intelligence technologies, which have seen rapid advancements in recent years. Both leaders acknowledged the potential risks associated with AI, including issues related to privacy, security, and job displacement. The H200 chips, which are high-performance processors designed for AI workloads, were also a key topic, reflecting the strategic importance of semiconductor supply chains in the tech sector.

Nvidia's H200 chips are among the most advanced AI accelerators available, capable of handling massive computational tasks required for training large language models and other AI applications. The chips are subject to export controls imposed by the US government, which has sought to limit China's access to cutting-edge semiconductor technology. Trump's mention of the chips suggests that the topic of technology transfer and export restrictions was a point of discussion during the summit.

The meeting between Trump and Xi marks a continuation of high-level dialogue on technology and trade between the two countries. Previous summits have covered a range of issues, including tariffs, intellectual property rights, and cybersecurity. The inclusion of AI guardrails in the agenda indicates a growing recognition of the need for global norms in AI development, especially as nations race to achieve dominance in this field.

Industry experts have noted that the discussions could have significant implications for tech companies like Nvidia, which rely on global markets for their products. The US government has previously imposed restrictions on the sale of advanced chips to China, citing national security concerns. If the talks lead to a relaxation of these controls, it could open up new opportunities for Nvidia and other semiconductor firms in the Chinese market.

For users and businesses, the outcome of these discussions may influence the availability and cost of AI technologies. Stricter guardrails could lead to more transparent and accountable AI systems, benefiting consumers through enhanced safety and reliability. However, any changes in export policies could affect global supply chains, potentially leading to price fluctuations for AI hardware and software.

The specific details of the agreements reached during the summit have not been publicly disclosed, and it remains unclear whether any concrete actions will be taken. Both leaders are expected to continue discussions through diplomatic channels, with further meetings likely to address additional aspects of AI governance and technology trade. The international community will be closely watching for any formal announcements or policy changes that may emerge from these high-level talks.